MdSm 601

Introduction to Modeling & Simulation

Game Theory

Based on: Avinash K. Dixit & Barry J. Nalebuff, The Art of Strategy (W.W. Norton, 2008)

Part One

- “Game”—situation of strategic interdependence

- May be conflict (zero-sum; one’s gain is the other’s loss); more commonly, games are a combination of mutually gainful (or harmful) strategies

- May be sequential or simultaneous

- Different games may have identical or very similar mathematical forms

- Part of the interdependence is the credibility of the players

- It is not always the case that more for one player means less for others

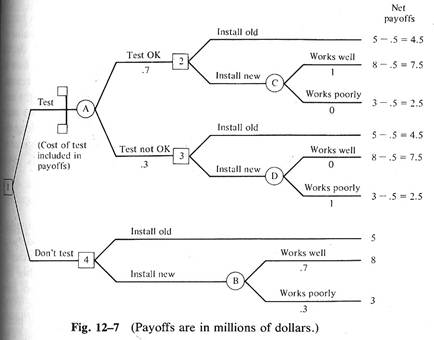

- Sequential games:

- use decision trees to analyze.

- Rule 1: Look forward & reason backward

- “Advantage of commitment”: limiting one’s own freedom of action in a sequential game to influence the other player’s actions

- To be “fully solvable” by backward reasoning, a sequential game must have

i. Absence of uncertainty

ii. Situation is completely determined (no chance determinants)

iii. Other’s objectives are known (but do not assume they are the same as yours)

- Problem of “strategic uncertainty” (How will the other player react?)

i. Need to balance “self-regarding” & “other-regarding” behavior
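Rule 1 (look forward and reason backward) can be sketched in code. The following is a minimal illustration, assuming a game with no uncertainty and known payoffs; the `Node` class, the `solve` function, and the entry-game payoffs are hypothetical and not taken from the book.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class Node:
    """A decision node (a player moves) or a leaf (payoffs are set)."""
    player: Optional[int] = None                  # index of the player who moves here
    children: Dict[str, "Node"] = field(default_factory=dict)
    payoffs: Optional[Tuple[float, ...]] = None   # set only at leaves


def solve(node):
    """Return (payoffs, path) reached when every player reasons backward."""
    if node.payoffs is not None:
        return node.payoffs, []
    best = None
    for action, child in node.children.items():
        payoffs, path = solve(child)
        # The mover compares outcomes using only his or her own payoff.
        # Ties go to the action listed first.
        if best is None or payoffs[node.player] > best[0][node.player]:
            best = (payoffs, [action] + path)
    return best


# Hypothetical entry game: player 0 (entrant) moves first, player 1 (incumbent) responds.
tree = Node(player=0, children={
    "stay out": Node(payoffs=(0, 10)),
    "enter": Node(player=1, children={
        "fight": Node(payoffs=(-2, 2)),
        "accommodate": Node(payoffs=(3, 5)),
    }),
})

payoffs, path = solve(tree)
print(path, payoffs)  # ['enter', 'accommodate'] (3, 5)
```

The entrant looks ahead to the incumbent's best reply (accommodate) before choosing, which is why entering beats staying out in this sketch.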

- The canonical form of the game:

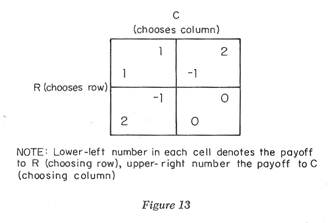

- Simultaneous games: Use a payoff matrix to calculate strategy.

- Payoff matrix—lay out all the consequences of all the combinations of the simultaneous choices.

- (See Gambit software, http://gambit.sourceforge.net and ComLabGames, http://www.comlabgames.com)

- Dominant strategy—a strategy that is better for a player than all other available strategies, no matter what strategy or strategy combinations the other player chooses.

- “Quasi-magical thinking” (Shafir & Tversky)—idea that by taking some action you can influence what the other will do, even if decisions are being made simultaneously.

- Rule 2: If you have a dominant strategy, use it.
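As an illustration of Rule 2, the sketch below stores a payoff matrix as a dictionary and searches for a strictly dominant strategy. The payoffs are a hypothetical prisoners' dilemma (negative years in jail), and the function names are assumptions made for this example.

```python
# Payoff matrix as {(row_action, col_action): (row_payoff, col_payoff)}.
# Hypothetical prisoners' dilemma; payoffs are negative years in jail.
PAYOFFS = {
    ("silent", "silent"): (-1, -1),
    ("silent", "confess"): (-10, 0),
    ("confess", "silent"): (0, -10),
    ("confess", "confess"): (-5, -5),
}


def actions(payoffs, player):
    """Sorted list of the actions available to the given player (0 = row, 1 = column)."""
    return sorted({cell[player] for cell in payoffs})


def dominant_strategy(payoffs, player):
    """Return the action that is strictly best against every rival action, or None."""
    own, rival = actions(payoffs, player), actions(payoffs, 1 - player)

    def payoff(mine, theirs):
        cell = (mine, theirs) if player == 0 else (theirs, mine)
        return payoffs[cell][player]

    for candidate in own:
        if all(payoff(candidate, r) > payoff(other, r)
               for other in own if other != candidate
               for r in rival):
            return candidate
    return None


print(dominant_strategy(PAYOFFS, 0), dominant_strategy(PAYOFFS, 1))  # confess confess
```

Both players have "confess" as a dominant strategy, yet the resulting cell (-5, -5) is worse for both than (-1, -1).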

- Conventional economic theory from Adam Smith on is wrong to assume that pursuing one’s own self-interest leads to an outcome that is best for all.

- “General theory of tacit cooperation in repeated games”—Cheating may gain one player a short-term advantage, but this harms the relationship and creates long-run costs.

- One Strategy for playing a Prisoners’ Dilemma

- Principles for effective strategy

i. Clarity

ii. Niceness

iii. Provocability

iv. Forgivingness

- Tit for tat: cooperate in first period and from then on mimic rival’s action from previous period.

i. At best, ties its rival

ii. Always comes close to rival strategies

iii. At worst, ends up getting beaten by one defection

iv. “Flawed” strategy

1. mistake “echoes” back & forth

2. will never accept punishment without hitting back—creates “cycles” or “reprisals”

3. no way to say “enough is enough”
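The properties of tit for tat listed above can be checked with a small simulation. This is a sketch only: the per-round payoffs (3, 0, 5, 1) are assumed values for a prisoners' dilemma, and `slip_round` is a hypothetical parameter that flips one move to model a mistake.

```python
# Per-round payoffs (player A, player B); hypothetical prisoners' dilemma values.
PAYOFF = {("C", "C"): (3, 3), ("C", "D"): (0, 5), ("D", "C"): (5, 0), ("D", "D"): (1, 1)}


def tit_for_tat(own_history, rival_history):
    """Cooperate first, then copy the rival's previous move."""
    return "C" if not rival_history else rival_history[-1]


def always_defect(own_history, rival_history):
    return "D"


def play(strategy_a, strategy_b, rounds, slip_round=None):
    """Play repeated rounds; optionally flip A's move once to model a mistake."""
    hist_a, hist_b, score_a, score_b = [], [], 0, 0
    for t in range(rounds):
        a = strategy_a(hist_a, hist_b)
        b = strategy_b(hist_b, hist_a)
        if t == slip_round:
            a = "D" if a == "C" else "C"
        pay_a, pay_b = PAYOFF[(a, b)]
        hist_a.append(a)
        hist_b.append(b)
        score_a += pay_a
        score_b += pay_b
    return "".join(hist_a), "".join(hist_b), score_a, score_b


# Beaten by exactly one defection's worth of payoff.
print(play(tit_for_tat, always_defect, 5))              # ('CDDDD', 'DDDDD', 4, 9)
# A single mistake in round 2 echoes back and forth indefinitely.
print(play(tit_for_tat, tit_for_tat, 6, slip_round=2))  # ('CCDCDC', 'CCCDCD', 16, 16)
```

Without the slip, two tit-for-tat players would score 18 each over six rounds, so the echo is costly to both.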

- But cooperation occurs significantly often, even with one-time games.

- Women cooperate with each other more, less with men, and men least of all.

- “Contribution game”—initial stake, one can keep part, rest goes to common pool. Pool is then doubled and divided equally.

i. People will punish “social cheaters”

ii. prospect of punishment increases contributions in the first place
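The arithmetic of the contribution game can be worked through as follows. The group size (four players), the stake of 10, and the function name are assumptions for illustration; only the doubling and equal division come from the description above.

```python
def contribution_game(stakes, contributions, multiplier=2.0):
    """Return each player's final holdings after the pool is multiplied and shared equally."""
    if len(stakes) != len(contributions):
        raise ValueError("one contribution per player is required")
    pool_share = multiplier * sum(contributions) / len(stakes)
    return [stake - gift + pool_share for stake, gift in zip(stakes, contributions)]


stakes = [10, 10, 10, 10]
print(contribution_game(stakes, [10, 10, 10, 10]))  # [20.0, 20.0, 20.0, 20.0]
print(contribution_game(stakes, [0, 10, 10, 10]))   # [25.0, 15.0, 15.0, 15.0]
print(contribution_game(stakes, [0, 0, 0, 0]))      # [10.0, 10.0, 10.0, 10.0]
```

With four players and a doubled pool, each unit contributed returns only half a unit to the contributor, so free riding pays individually even though full contribution is best for the group.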

- How to achieve cooperation

i. detection of cheating (as immediate—and targeted—as possible)

ii. nature of punishment (external or intrinsic)

iii. clarity (boundaries and consequences should be clear from the outset)

iv. certainty (of both reward and punishment)

v. size (“principle of graduation”—let the punishment fit the crime)

vi. repetition (discounting of future benefits, likelihood of continuing relationship)

- Dilemma of collusion

i. Contributes to clarity among the players

ii. Unnecessary punishment among the players is avoided

iii. But it harms the general public’s interest

Chapter 4 A Beautiful Equilibrium

- Nash Equilibrium—a game outcome where the action of each player is best for him given his beliefs about the other’s action, and the action of each is consistent with the other’s beliefs about it.

- “Prominence”—where there are multiple Nash equilibria, one of the strategies must become more salient than the others.

- “focal point”—when players’ expectations converge on a prominence

- What is important is not that it is obvious to you, or to your partner, but that it is obvious to each that it is obvious to the others.

- As a result, equilibrium can easily be determined by whim or fad

- Studying Cases Using Nash Equilibria

- Simplify the analysis

i. Rule 3: Eliminate dominated strategies from consideration.

ii. Remove all dominated strategies and all “never best” strategies

iii. Look for “mutual best” response cells

iv. Rule 4: Look for an equilibrium, a pair of strategies in which each player’s action is the best response to the other’s.

v. If there is a unique equilibrium of this kind, there are good arguments why all players should choose it.
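The search for "mutual best response" cells (Rule 4) can be sketched for pure strategies as below. The coordination game and its payoffs are hypothetical, and the function name is an assumption.

```python
def pure_nash_equilibria(payoffs):
    """Return cells where each player's action is a best response to the other's.

    payoffs maps (row_action, col_action) -> (row_payoff, col_payoff).
    """
    rows = sorted({r for r, _ in payoffs})
    cols = sorted({c for _, c in payoffs})
    equilibria = []
    for r in rows:
        for c in cols:
            # Row player cannot do better by switching rows, given column c.
            row_best = all(payoffs[(r, c)][0] >= payoffs[(alt, c)][0] for alt in rows)
            # Column player cannot do better by switching columns, given row r.
            col_best = all(payoffs[(r, c)][1] >= payoffs[(r, alt)][1] for alt in cols)
            if row_best and col_best:
                equilibria.append((r, c))
    return equilibria


# Hypothetical coordination game: two drivers each pick a side of the road.
DRIVING = {
    ("left", "left"): (1, 1), ("left", "right"): (-1, -1),
    ("right", "left"): (-1, -1), ("right", "right"): (1, 1),
}
print(pure_nash_equilibria(DRIVING))  # [('left', 'left'), ('right', 'right')]
```

The two equilibria here illustrate why a focal point is needed: the payoff matrix alone does not say which one the players will reach.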

- Can be solved mathematically (in some cases) by solving multiple linear equations for their point(s) of intersection (“linear programming”).

- Begin with Nash equilibrium, then think of reasons why, and manner in which, outcomes may differ from Nash predictions.

i. Requires not only choosing best response based on belief about the other players’ actions, but also requires that those beliefs are correct!

Part Two

- If you follow any system or pattern in your choice of strategy, it will be exploited by the other player to his advantage and to your disadvantage.

- The value of randomization can be quantified (soccer free-kick example)

- In a strict zero-sum game, one should attempt to

i. Minimize the opponent’s maximum payoff (minimax), while

ii. The opponent attempts to maximize his own minimum payoff (maximin)

iii. If both do this, minimax equals maximin (von Neumann-Morgenstern theorem, which is a special case of the Nash equilibrium)
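For a 2x2 zero-sum game with no pure-strategy saddle point, the minimax mix can be computed from the indifference conditions. The sketch below assumes illustrative scoring percentages for a kicker choosing left or right against a goalkeeper doing the same; the numbers and the function name are assumptions, not data quoted from the notes.

```python
def solve_2x2_zero_sum(a, b, c, d):
    """Equilibrium mix for a 2x2 zero-sum game with no pure-strategy saddle point.

    Row player's payoffs are [[a, b], [c, d]]; the column player receives the negative.
    Returns (p, q, value): p = P(row plays first row), q = P(column plays first column).
    """
    denom = a - b - c + d
    if denom == 0:
        raise ValueError("degenerate game: indifference conditions have no unique solution")
    p = (d - c) / denom   # makes the column player indifferent between columns
    q = (d - b) / denom   # makes the row player indifferent between rows
    if not (0 < p < 1 and 0 < q < 1):
        raise ValueError("game has a pure-strategy saddle point; mixing is not needed")
    value = (a * d - b * c) / denom
    return p, q, value


# Assumed success rates (%) for the kicker: rows = kick left/right, columns = dive left/right.
p, q, value = solve_2x2_zero_sum(58, 95, 93, 70)
print(round(p, 3), round(q, 3), round(value, 2))  # 0.383 0.417 79.58
```

At these mixes neither side can gain by changing its own probabilities, which is the sense in which minimax equals maximin.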

i. seize the first-mover status to declare a planned course of action

i. “Commitment”—unconditional strategic move that restricts one’s future actions

1. “Threat”—response rule that punishes players who fail to act as desired

2. “Promise”—response rule that offers to reward others who act as desired

iv. Response rules that do not promise a change in behavior may be

1. Warning—showing a self-interest to carry out a threat

2. Assurance—showing a self-interest to carry out a promise

iii. It is never advantageous to allow others to threaten you

i. Distinction between threat & promise depends on what is called the status quo

1. a compellent threat is like a deterrent promise, with a change of status quo.

a. Deterrence has no timeline, and can be achieved more simply and better than a threat.

ii. Certainty: the other player must believe the threat or promise

1. This need not deny the presence of risk

2. May be implemented in multiple small steps (to avoid salami tactics).

3. Keep threats at the smallest level needed to keep them effective (“principle of graduation”)

4. Brinksmanship—start small, but deliberately and gradually raise the size of the threat (risk)

a. Increase the risk of the (same) bad thing happening

b. Most threats carry a risk of error, and therefore an element of brinksmanship

c. Even with the best of care, brinksmanship may fail (the bad thing may actually happen)

Ch. 7 Making Strategies Credible

i. Write contracts to back up your resolve

1. enforcing party must have some independent incentive to do so

ii. Establish and use reputation

i. Cut off communication (can make action truly irreversible)

iii. Leave the outcome beyond your control (or even to chance)

1. lessen the risk from breaking the contract

2. use when large degree of commitment is infeasible

3. to avoid unraveling of trust, there should be no clear final step

i. Develop credibility through teamwork

ii. Reputation (you can neutralize the other’s reputation by keeping it secret)

iii. Communication (one can be unavailable to receive a communication)

v. Moving in steps (resist in small steps—salami tactics)

vi. Mandated agents (refuse to deal with the agent and demand to speak to the principal)

Ch. 8 Interpreting and Manipulating Information

- Why not rely on others to tell the truth? The answer is obvious: because it might be against their interests.

- The greater the conflict, the less the message can be trusted

- “Second level deception”—lying by telling the truth

- Actions speak louder than words. Watch what the other player does, not what he or she says.

- So, each player will try to manipulate actions for their information content.

- “Signaling”: choosing actions that promote favorable leakage

- “Signal jamming”—Choosing actions that reduce or eliminate unfavorable leakages

- “Screening”—Setting up a situation where the person would find it optimal to take one action if the information was of one kind, and another action if it was of another kind.

- To be an effective signal, an action should be incapable of being mimicked by a rational liar

i. It must be unprofitable when the truth differs from what you want to convey

ii. Action serves to discriminate between possible types

- People have better information about their own risks than do their potential partners (e.g., health insurance)

- “Adverse selection”—Groucho Marx effect. “I wouldn’t want to belong to any club that would have me.”

- “Positive selection”—Capital One strategy. No one but the type of person you want would be interested.

- Examples in use:

ii. Benefits in kind can serve as a screening device

ii. Separating equilibrium—one type signals and others do not, thus distinguishing the types.

2. Bayes’ Rule is used to calculate probabilities.
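A short sketch of how Bayes' Rule updates beliefs about a player's type after a signal is observed. The two-type setup, the probabilities, and the function name are assumptions for illustration.

```python
def posterior(prior, p_signal_given_type, p_signal_given_other):
    """P(type | signal) by Bayes' rule for a two-type population."""
    evidence = prior * p_signal_given_type + (1 - prior) * p_signal_given_other
    if evidence == 0:
        raise ValueError("signal has zero probability under both types")
    return prior * p_signal_given_type / evidence


# 30% of players are the "good" type; good types send the signal 90% of the time,
# other types 20% of the time (all hypothetical numbers).
print(round(posterior(0.3, 0.9, 0.2), 3))  # 0.659
# Separating equilibrium: only the good type signals, so the signal is fully revealing.
print(posterior(0.3, 1.0, 0.0))            # 1.0
```

If both types always send the signal, the posterior stays at the prior, so the signal carries no information.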

- Price Discrimination by Screening

- Create different versions of the same good and price the versions differently.

- “Incentive compatibility constraint”—Cannot charge full willingness to pay for higher-priced good (since there is a benefit to defecting to lower-priced good)

- “Participation constraint”—Cannot raise lowest price higher than that type’s willingness to pay
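The two constraints can be turned into a small pricing calculation. This is a sketch under stated assumptions: two buyer types, two versions, and illustrative willingness-to-pay figures; the names `screening_prices`, `tourist`, and `business` are hypothetical.

```python
def screening_prices(wtp_low, wtp_high):
    """Highest prices for a basic and a premium version that keep two buyer types separated.

    wtp_low / wtp_high: dicts with keys "basic" and "premium" giving each type's
    willingness to pay. Assumes the low type should buy basic and the high type premium.
    """
    # Participation constraint: the low type must be willing to buy the basic version.
    basic_price = wtp_low["basic"]
    # Incentive compatibility: the high type must not gain by defecting to basic.
    surplus_from_defecting = max(wtp_high["basic"] - basic_price, 0)
    premium_price = wtp_high["premium"] - surplus_from_defecting
    if wtp_low["premium"] > premium_price:
        raise ValueError("low type would also buy premium; the versions do not separate the types")
    return basic_price, premium_price


tourist = {"basic": 140, "premium": 175}     # assumed willingness to pay
business = {"basic": 225, "premium": 300}    # assumed willingness to pay
print(screening_prices(tourist, business))   # (140, 215)
```

The premium price stops at 215 rather than the full 300 because the high type could otherwise pocket a surplus of 85 by buying the basic version.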

Ch. 9 Cooperation and Coordination

- Uncoordinated choices can interact to produce a poor outcome for society. Even in a situation of relative (rather than absolute) performance, the game does not have to be zero-sum.

- “Scope of gains” can be increased by reducing the inputs.

- But this would require an enforceable collective agreement

- It is difficult to arrange a self-enforcing cartel; it is better if an outsider enforces the collective agreement (such as limiting competition)

- One can use the market to charge people for the harm (or negative benefit) they cause to others

- There are often three equilibria: one at either pole of the choices, and one in the mid-range. The social dynamic that drives toward one of the extremes is called “tipping”

i. Guarantees can be offered to offset social dynamics and stabilize a mid-range equilibrium

i. Sometimes preferable to consider reforms only as a package rather than a series of small steps

i. Everyone makes the same choice, but it is the wrong one

iii. Choice may lead to an extreme equilibrium, rather than a more desirable mid-range

i. Choices lead stepwise down a wrong path

ii. Excessive homogeneity (mutually reinforcing expectations)

Ch. 10 Auctions, Bidding, and Contests

i. Bid until the price exceeds your value, then drop out

ii. Problem is to determine “value”

1. private value—independent of others’ assessment

i. All start as bidders, and drop out as value is passed.

ii. Winning bidder pays the value at which the penultimate bidder dropped out.

i. All bids are sealed. Winning bidder pays price of second highest bid.

ii. All bidders have a dominant strategy—bid their true valuation

iii. Implications of Vickrey Auctions

2. Online auctions (“proxy bidding”)

3. “Sniping”—wait until last minute to enter best proxy bid

4. Attempt to gain strategic advantage by withholding information about one’s true valuation

5. Sniping may be explained by people not knowing their own valuation.

iv. “Winner’s curse”—winning a bid and discovering it isn’t worth what one thought

i. Seal bid in envelope. Highest bid wins.

ii. Never bid your valuation (or, worse, more than your valuation)

1. It guarantees that you break even at best

i. Auction starts with high price that declines; first bidder to stop the decline pays that price.

- Implications

- “Revenue equivalence theorem”: when valuations are private and the game is symmetric, the seller makes the same amount of money whether the auction is English/Japanese, Vickrey, Dutch, or sealed-bid

- Optimal bidding strategy is to bid what you think the next-highest person’s value is, given the belief that you have the highest value.

- As the number of bidders increases, the market approaches perfect competition and all of the surplus goes to the seller
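The earlier claim that bidding one's true valuation is a dominant strategy in a Vickrey auction can be checked numerically. The sketch below assumes a private value of 100 and a single rival bid; the function name and the tie rule (ties count as a loss) are assumptions.

```python
def second_price_payoff(my_bid, my_value, rival_bids):
    """Payoff in a sealed-bid second-price (Vickrey) auction; ties count as a loss."""
    highest_rival = max(rival_bids)
    if my_bid > highest_rival:
        return my_value - highest_rival   # winner pays the second-highest bid
    return 0


my_value = 100
for rival in (60, 90, 110, 140):
    truthful = second_price_payoff(my_value, my_value, [rival])
    shaded = second_price_payoff(80, my_value, [rival])      # bid below value
    inflated = second_price_payoff(120, my_value, [rival])   # bid above value
    print(rival, truthful, shaded, inflated)
# 60 40 40 40
# 90 10 0 10
# 110 0 0 -10
# 140 0 0 0
```

Shading the bid forfeits a profitable win when the rival bids 90, and inflating it buys a loss when the rival bids 110; the truthful bid is never worse in any row.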

- Many games that might not look like auctions turn out to be one:

i. Pre-emption game—First person to launch has a chance to own the market, provided they succeed.

1. wait until you are fully ready and miss the opportunity

ii. War of attrition—instead of who goes in first, game is who gives in first

iii. Case study of Spectrum Game

1. opportunity for tacit cooperation

3. can employ punishment/cooperation that would otherwise be impossible without explicit collusion

4. Moral: If you don’t like the game, look for the larger game.

- All other things equal, looking ahead and reasoning backward leads to a simple rule: Split the total down the middle. But…

- Cost of waiting: the better a party can do by itself in the absence of an agreement (its BATNA, “Best Alternative to a Negotiated Agreement”), the larger its share of the bargaining pie will be

- Size of the pie (the “total”) is measured by how much value is created when the two sides reach an agreement compared to when they don’t (value above the sum of the BATNAs)

i. Talmudic principle of the disputed garment

ii. If BATNAs are not fixed, opens up strategy of influencing the BATNAs.
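The split-the-pie rule with BATNAs reduces to simple arithmetic: each side keeps its BATNA and the two share the value created above the sum of the BATNAs. The sketch below uses hypothetical numbers; the disputed-garment case is modeled by treating the uncontested half as the first claimant's fallback, which is an interpretation made for this example.

```python
def split_the_pie(total, batna_a, batna_b):
    """Each side gets its BATNA plus half of the value the agreement creates."""
    pie = total - (batna_a + batna_b)
    if pie < 0:
        raise ValueError("no agreement beats the two BATNAs combined")
    return batna_a + pie / 2, batna_b + pie / 2


print(split_the_pie(100, 0, 0))    # (50.0, 50.0): equal fallbacks, split down the middle
print(split_the_pie(100, 40, 10))  # (65.0, 35.0): the better BATNA earns the larger share
# Disputed garment: one side claims all, the other claims half, so only half is contested.
print(split_the_pie(1, 0.5, 0))    # (0.75, 0.25)
```

Raising one's own BATNA, or lowering the other side's, shifts the split without changing the size of the pie being divided.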

i. Different ideas about what constitutes success

ii. Strikes are an example of signaling

iii. “Virtual strike” can work while both sides are still talking.

v. Rubinstein Bargaining: stepwise negotiation, taking into account the value of time delay.

2. The lower the cost to waiting, the less the advantage of going first.
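The link between the cost of waiting and the first-mover advantage can be illustrated with the standard closed-form solution of the alternating-offers model. The notes do not state this formula; it is supplied here as the usual textbook result, with discount factors as assumed inputs (a factor near 1 means waiting is cheap).

```python
def rubinstein_shares(delta_first, delta_second):
    """Equilibrium split of a pie of size 1 in alternating-offers bargaining.

    delta_*: per-round discount factors in [0, 1); closer to 1 means waiting is cheaper.
    Returns (share of the player who proposes first, share of the other player).
    """
    if not (0 <= delta_first < 1 and 0 <= delta_second < 1):
        raise ValueError("discount factors must lie in [0, 1)")
    first = (1 - delta_second) / (1 - delta_first * delta_second)
    return first, 1 - first


for delta in (0.5, 0.9, 0.99):
    first, second = rubinstein_shares(delta, delta)
    print(delta, round(first, 4), round(second, 4))
# 0.5 0.6667 0.3333
# 0.9 0.5263 0.4737
# 0.99 0.5025 0.4975
```

As waiting becomes cheaper for both sides, the first proposer's share falls toward one half.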

i. It’s okay to speak the truth when it doesn’t matter.

iii. Anyone’s vote affects the outcome only when it creates or breaks a tie.

- Naïve voting—but in three-way (or more) race, no determinative outcome.

- Condorcet rules—each pair of candidates competes in a pairwise vote. Winner has the smallest maximum votes against.

- Voting cycle: the order in which votes are taken can determine final outcome.
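A voting cycle, and the way the order of votes settles the outcome, can be shown with three voters and three candidates. The ballots and function names below are hypothetical; in `agenda_winner`, ties are assumed to favor the candidate already standing.

```python
from itertools import combinations


def pairwise_results(ballots):
    """For each pair of candidates, count voters who rank one above the other.

    ballots: list of rankings, each a list of candidates from most to least preferred.
    Returns {(x, y): (votes_for_x, votes_for_y)}.
    """
    candidates = sorted(ballots[0])
    results = {}
    for x, y in combinations(candidates, 2):
        x_votes = sum(b.index(x) < b.index(y) for b in ballots)
        results[(x, y)] = (x_votes, len(ballots) - x_votes)
    return results


def agenda_winner(ballots, agenda):
    """Run pairwise votes in agenda order; the survivor of each vote meets the next candidate."""
    survivor = agenda[0]
    for challenger in agenda[1:]:
        ahead = sum(b.index(survivor) < b.index(challenger) for b in ballots)
        if 2 * ahead < len(ballots):
            survivor = challenger
    return survivor


cycle = [["A", "B", "C"], ["B", "C", "A"], ["C", "A", "B"]]
print(pairwise_results(cycle))  # {('A', 'B'): (2, 1), ('A', 'C'): (1, 2), ('B', 'C'): (2, 1)}
print(agenda_winner(cycle, ["A", "B", "C"]))  # C
print(agenda_winner(cycle, ["B", "C", "A"]))  # A
print(agenda_winner(cycle, ["C", "A", "B"]))  # B
```

A beats B, B beats C, and C beats A, so no candidate wins every pairwise vote; with these ballots, whoever enters the agenda last wins.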

- Voters

- To distinguish your opinion from the pack, the trick is to take the most extreme stand consistent with still appearing rational

- People have an incentive to tell the truth about direction but to exaggerate the intensity of their values. This is a variant on “split the difference,” where everyone has an incentive to begin with an extreme position for settling disputes.

- No voter will take an extreme position if the candidate follows the preferences of the median (not the average) voter

i. However, only applicable when choice is one-dimensional.

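- Majority vote (50% + 1) can be easily destabilized; a 2/3 majority rule is more stable because a status quo at the average of all voters’ preferences is guaranteed roughly 36% support (under suitable assumptions about how preferences are distributed), so no challenger can reach two-thirds.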

- “Approval voting” (may vote for as many candidates as one wishes) permits voters to express true preferences but still permits a rule (majority of votes cast, limited number of slots to fill) for arriving at a resolution.

- Incentive payment schemes must cope with the problem of “moral hazard” (creating a perverse incentive to do something unacceptable)

- A fixed-sum payment does not handle the “incentive” component very well

- Pure piece-rate does not handle the participation constraint (how much could have been earned in other opportunities)

- System must also be simple enough to be understood.

- Writing an incentive contract

ii. Linear payment is more robust, less prone to manipulation, than proportional

ii. Average payment must meet the participation constraint

i. If tasks are substitutes for each other, strong incentive to one will hurt the effort in another.

iii. If tasks are complements, a single incentive affects performance in both

v. Overall strength of incentives is inversely proportional to the number of different supervisors

i. Keeping records of individual strings of success/failure

ii. Have several experts working on related projects